Quickstart

Here we show how to get started with the simulator, and some of the things you can do with it.

We recommend looking at the notebook that we provide in the Python API repository for a more complete overview.

Installation

Follow the instructions in Installation to install the environment, and make sure you can run it.

Setting up a scene

We will start by opening a connection to VirtualHome and setting up the scene. To do so, create a UnityCommunication object and reset the environment.

    # cd into virtualhome repo
    from simulation.unity_simulator import comm_unity

    YOUR_FILE_NAME = ""  # Your path to the simulator
    port = "8080"  # or your preferred port

    comm = comm_unity.UnityCommunication(
        file_name=YOUR_FILE_NAME,
        port=port
    )

    env_id = 0  # env_id ranges from 0 to 6
    comm.reset(env_id)

Visualizing the scene

We will now visualize the scene. Each environment has a set of cameras that we can use for this. We will select a few and take screenshots from them.

    # Check the number of cameras
    s, cam_count = comm.camera_count()
    s, images = comm.camera_image([0, cam_count-1])

This will create two images, stored as a list in images. The last one corresponds to an overall view of the apartment.

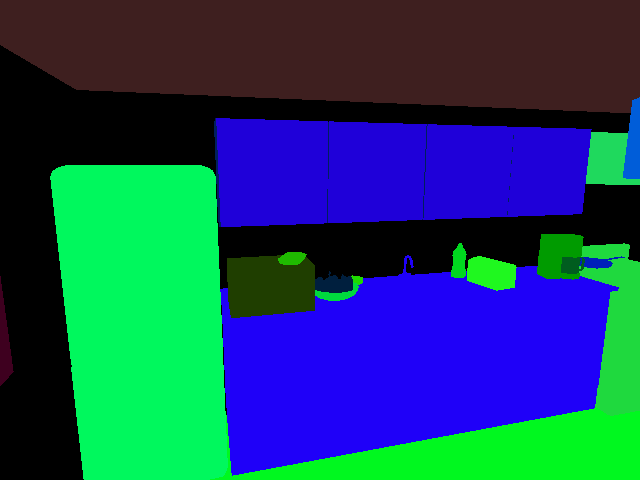

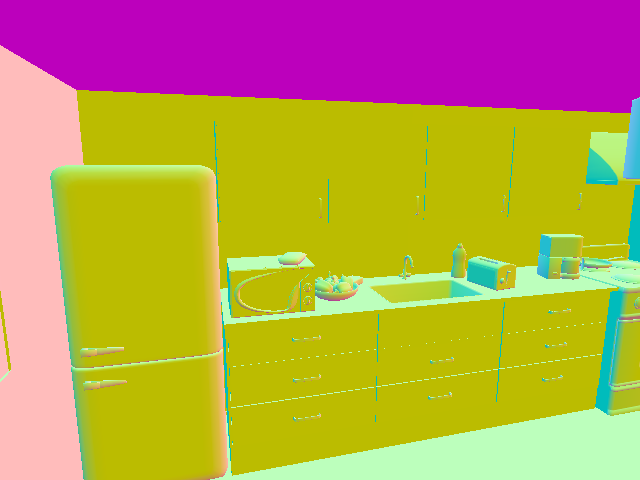

You can also add new cameras to the apartment and capture several modalities from them.

    # Add a camera at the specified rotation and position
    comm.add_camera(position=[-3, 2, -5], rotation=[10, 15, 0])

    # View camera from different modes
    modes = ['normal', 'seg_class', 'surf_normals']
    images = []
    for mode in modes:
        s, im = comm.camera_image([cam_count], mode=mode)
        images.append(im[0])

The list images now contains one image per mode, in the order given in modes.

Querying the scene

We will see here how to get information about the scene beyond images. Scenes in VirtualHome are represented as graphs. Let's start by querying the graph of the current scene.

    # Reset the scene
    comm.reset()

    # Get graph
    s, graph = comm.environment_graph()

Here, graph is a Python dictionary with two keys, nodes and edges. nodes is a list of all the objects in the scene, each represented by a dictionary with an id that identifies the object, a class_name with the name of the object, and information about the object's states, 3D properties, etc. edges is a list of edges representing spatial relationships between the objects.
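As a minimal sketch of how this structure can be queried, the snippet below uses a small hand-written graph in the same shape, so it runs without the simulator. The ids, the objects, and the helper names nodes_by_class and relations_of are illustrative assumptions, not part of the VirtualHome API.

```python
# Toy graph in the same shape as the one returned by environment_graph().
# The ids and objects are made up for illustration.
toy_graph = {
    "nodes": [
        {"id": 1, "class_name": "kitchen", "states": []},
        {"id": 2, "class_name": "fridge", "states": ["CLOSED"]},
        {"id": 3, "class_name": "salmon", "states": []},
    ],
    "edges": [
        {"from_id": 2, "to_id": 1, "relation_type": "INSIDE"},
        {"from_id": 3, "to_id": 1, "relation_type": "INSIDE"},
    ],
}


def nodes_by_class(graph, class_name):
    """Return every node whose class_name matches (hypothetical helper)."""
    return [node for node in graph["nodes"] if node["class_name"] == class_name]


def relations_of(graph, node_id):
    """Return (relation_type, target class_name) for each edge leaving node_id."""
    names = {node["id"]: node["class_name"] for node in graph["nodes"]}
    return [
        (edge["relation_type"], names[edge["to_id"]])
        for edge in graph["edges"]
        if edge["from_id"] == node_id
    ]


fridge = nodes_by_class(toy_graph, "fridge")[0]
print(fridge["id"], fridge["states"])
print(relations_of(toy_graph, fridge["id"]))
```

With a real scene, the same helpers should work on the graph returned by comm.environment_graph(), as long as it keeps the id, class_name, from_id, to_id and relation_type fields described above.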

Modifying the scene

You can use the graph to modify the scene. This allows you to generate more diverse videos, or environments to train your agents. Let's try placing an object inside the fridge and opening it.

    # Get the fridge node
    fridge_node = [node for node in graph['nodes'] if node['class_name'] == 'fridge'][0]

    # Open it
    fridge_node['states'] = ['OPEN']

    # create a new node
    new_node = {
        'id': 1000,
        'class_name': 'salmon',
        'states': []
    }

    # Add an edge
    new_edge = {'from_id': 1000, 'to_id': fridge_node['id'], 'relation_type': 'INSIDE'}
    graph['nodes'].append(new_node)
    graph['edges'].append(new_edge)

    # update the scene
    comm.expand_scene(graph)
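The node-plus-edge pattern used here can be wrapped in a small helper. The sketch below is one possible way to do it, not part of the VirtualHome API: add_object_inside is a hypothetical name, and picking the new id as the largest existing id plus one (instead of a fixed value such as 1000) assumes the simulator accepts any unused id.

```python
import copy


def add_object_inside(graph, class_name, container_class):
    """Return (new_graph, new_id) with a new object placed INSIDE a container.

    Hypothetical helper: works on a copy of the graph and picks an unused id
    (largest existing id + 1) instead of a hard-coded one.
    """
    new_graph = copy.deepcopy(graph)
    containers = [
        node for node in new_graph["nodes"] if node["class_name"] == container_class
    ]
    if not containers:
        raise ValueError("no node of class {!r} in graph".format(container_class))
    new_id = max(node["id"] for node in new_graph["nodes"]) + 1
    new_graph["nodes"].append({"id": new_id, "class_name": class_name, "states": []})
    new_graph["edges"].append(
        {"from_id": new_id, "to_id": containers[0]["id"], "relation_type": "INSIDE"}
    )
    return new_graph, new_id


# Toy input with a made-up id, so the example runs without the simulator.
toy_graph = {
    "nodes": [{"id": 7, "class_name": "fridge", "states": ["CLOSED"]}],
    "edges": [],
}
updated, salmon_id = add_object_inside(toy_graph, "salmon", "fridge")
print(salmon_id, updated["edges"])
```

The returned graph can then be passed to comm.expand_scene in the same way as the manually edited graph.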

If you take an image from the same camera as before, the scene should look like this:

Generating videos

So far we have been setting up an environment without agents. Let's start adding agents and generate videos with activities.

    # Reset the scene
    comm.reset(0)
    comm.add_character('Chars/Female2')

    # Get the scene graph to look up object ids
    s, g = comm.environment_graph()

    # Get nodes for salmon and microwave
    salmon_id = [node['id'] for node in g['nodes'] if node['class_name'] == 'salmon'][0]
    microwave_id = [node['id'] for node in g['nodes'] if node['class_name'] == 'microwave'][0]

    # Put salmon in microwave
    script = [
        '<char0> [walk] <salmon> ({})'.format(salmon_id),
        '<char0> [grab] <salmon> ({})'.format(salmon_id),
        '<char0> [open] <microwave> ({})'.format(microwave_id),
        '<char0> [putin] <salmon> ({}) <microwave> ({})'.format(salmon_id, microwave_id),
        '<char0> [close] <microwave> ({})'.format(microwave_id)
    ]
    comm.render_script(script, recording=True, frame_rate=10)

This should have generated the following video:

We can also change the camera we are recording with, or record from multiple cameras, using the camera_mode argument of render_script.
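The script lines in this section follow a fixed pattern: a character tag, an action in square brackets, and then one or more object names, each followed by its id in parentheses. As a rough sketch, a small formatter can build these strings. script_line is a hypothetical helper, and the ids below are placeholders rather than values from a real scene.

```python
def script_line(char_index, action, *objects):
    """Format one instruction as '<charN> [action] <name> (id) ...'.

    Each object is a (name, node_id) pair. Hypothetical helper, not part of
    the VirtualHome API.
    """
    parts = ["<char{}>".format(char_index), "[{}]".format(action)]
    for name, node_id in objects:
        parts.append("<{}> ({})".format(name, node_id))
    return " ".join(parts)


# Placeholder ids; in practice they come from the environment graph.
salmon = ("salmon", 11)
microwave = ("microwave", 22)

script = [
    script_line(0, "walk", salmon),
    script_line(0, "grab", salmon),
    script_line(0, "open", microwave),
    script_line(0, "putin", salmon, microwave),
    script_line(0, "close", microwave),
]
for line in script:
    print(line)
```

The resulting list has the same form as the hand-written script, so it can be passed to render_script unchanged.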

Multiagent Videos

We can also generate videos with multiple agents in them. A video will be generated for every agent.

    # Reset the scene
    comm.reset(0)

    # Add two agents this time
    comm.add_character('Chars/Male2', initial_room='kitchen')
    comm.add_character('Chars/Female4', initial_room='bedroom')

    # Get the scene graph to look up object ids
    s, g = comm.environment_graph()

    # Get nodes for salmon and microwave, glass, faucet and sink
    salmon_id = [node['id'] for node in g['nodes'] if node['class_name'] == 'salmon'][0]
    microwave_id = [node['id'] for node in g['nodes'] if node['class_name'] == 'microwave'][0]
    glass_id = [node['id'] for node in g['nodes'] if node['class_name'] == 'waterglass'][-1]
    sink_id = [node['id'] for node in g['nodes'] if node['class_name'] == 'sink'][0]
    faucet_id = [node['id'] for node in g['nodes'] if node['class_name'] == 'faucet'][-1]

    # char0 puts the salmon in the microwave; char1 puts a glass in the sink and turns on the faucet
    script = [
        '<char0> [walk] <salmon> ({}) | <char1> [walk] <glass> ({})'.format(salmon_id, glass_id),
        '<char0> [grab] <salmon> ({}) | <char1> [grab] <glass> ({})'.format(salmon_id, glass_id),
        '<char0> [open] <microwave> ({}) | <char1> [walk] <sink> ({})'.format(microwave_id, sink_id),
        '<char0> [putin] <salmon> ({}) <microwave> ({}) | <char1> [putback] <glass> ({}) <sink> ({})'.format(salmon_id, microwave_id, glass_id, sink_id),
        '<char0> [close] <microwave> ({}) | <char1> [switchon] <faucet> ({})'.format(microwave_id, faucet_id)
    ]
    comm.render_script(script, recording=True, frame_rate=10, camera_mode=["PERSON_FROM_BACK"])

The previous command will generate frames corresponding to the following video.
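In the multi-agent script, each line holds one instruction per agent, separated by " | ". The sketch below shows one way to write each agent's plan separately and merge them step by step. combine_agents is a hypothetical helper, the ids are placeholders, and requiring all plans to have the same length is a simplifying assumption of this sketch rather than a documented constraint of the simulator.

```python
def combine_agents(*agent_scripts):
    """Join per-agent instruction lists step by step with ' | '.

    Hypothetical helper: every agent must provide the same number of steps.
    """
    lengths = {len(steps) for steps in agent_scripts}
    if len(lengths) > 1:
        raise ValueError("all agents need the same number of steps")
    return [" | ".join(step) for step in zip(*agent_scripts)]


# Placeholder ids; in practice they come from the environment graph.
char0_plan = [
    "<char0> [walk] <salmon> (11)",
    "<char0> [grab] <salmon> (11)",
]
char1_plan = [
    "<char1> [walk] <glass> (33)",
    "<char1> [grab] <glass> (33)",
]

script = combine_agents(char0_plan, char1_plan)
for line in script:
    print(line)
```

Keeping one list per agent can make longer plans easier to read and edit than a single list of combined lines.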

Interactive agents

So far we have seen how to generate videos, but we can use the same command to deploy or train agents in the environment. You can execute the previous instructions one by one, and get an observation or graph at every step. For that, you don't need to generate videos or play animations, since doing so will slow down your agents. Use skip_animation=True to execute actions without animating them. Remember to turn off the recording mode as well.

    # Reset the scene
    comm.reset(0)

    # Add two agents this time
    comm.add_character('Chars/Male2', initial_room='kitchen')
    comm.add_character('Chars/Female4', initial_room='bedroom')

    # Get the scene graph to look up object ids
    s, g = comm.environment_graph()

    # Get nodes for salmon and microwave, glass, faucet and sink
    salmon_id = [node['id'] for node in g['nodes'] if node['class_name'] == 'salmon'][0]
    microwave_id = [node['id'] for node in g['nodes'] if node['class_name'] == 'microwave'][0]
    glass_id = [node['id'] for node in g['nodes'] if node['class_name'] == 'waterglass'][-1]
    sink_id = [node['id'] for node in g['nodes'] if node['class_name'] == 'sink'][0]
    faucet_id = [node['id'] for node in g['nodes'] if node['class_name'] == 'faucet'][-1]

    # char0 puts the salmon in the microwave; char1 puts a glass in the sink and turns on the faucet
    script = [
        '<char0> [walk] <salmon> ({}) | <char1> [walk] <glass> ({})'.format(salmon_id, glass_id),
        '<char0> [grab] <salmon> ({}) | <char1> [grab] <glass> ({})'.format(salmon_id, glass_id),
        '<char0> [open] <microwave> ({}) | <char1> [walk] <sink> ({})'.format(microwave_id, sink_id),
        '<char0> [putin] <salmon> ({}) <microwave> ({}) | <char1> [putback] <glass> ({}) <sink> ({})'.format(salmon_id, microwave_id, glass_id, sink_id),
        '<char0> [close] <microwave> ({}) | <char1> [switchon] <faucet> ({})'.format(microwave_id, faucet_id)
    ]

    s, cc = comm.camera_count()
    for script_instruction in script:
        # Execute one instruction at a time, without recording or animation
        comm.render_script([script_instruction], recording=False, skip_animation=True)
        # Here you can get an observation, for instance
        s, im = comm.camera_image([cc-3])
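The same loop can also collect the scene graph after every step instead of an image. The sketch below wraps this in a function and exercises it with a stand-in object so it runs without the simulator. FakeComm and run_stepwise are hypothetical names, and FakeComm only mimics the call shapes used in this guide, so its return values are assumptions and not the simulator's actual output.

```python
class FakeComm:
    """Stand-in for UnityCommunication, used only to make this sketch runnable."""

    def __init__(self):
        self.executed = []

    def render_script(self, script, recording=False, skip_animation=True):
        # Record the instructions instead of sending them to the simulator.
        self.executed.extend(script)

    def environment_graph(self):
        # Return an empty graph in the (status, graph) shape used in this guide.
        return True, {"nodes": [], "edges": []}


def run_stepwise(comm, script):
    """Execute instructions one at a time and collect the graph after each."""
    graphs = []
    for instruction in script:
        comm.render_script([instruction], recording=False, skip_animation=True)
        s, graph = comm.environment_graph()
        graphs.append(graph)
    return graphs


fake = FakeComm()
graphs = run_stepwise(fake, ["<char0> [walk] <salmon> (11)", "<char0> [grab] <salmon> (11)"])
print(len(graphs), fake.executed)
```

With the real simulator, passing the comm object created earlier in place of FakeComm should give one graph per executed instruction, which an agent can use as its observation.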